Yannick H.

Too Long; Didn't Read

AI agents pursue goals instead of merely answering questions. That sounds like a revolution, but it only works for narrowly defined tasks with clean data and clear rules. Those who start with a small pilot project and honestly assess whether the data quality is sufficient can already achieve real benefits today.

Last autumn, I was sitting in a workshop with a client in Zurich. Mid-sized company, solid IT, a few ChatGPT pilots behind them. The IT manager leaned back and said something that has stuck with me ever since: "Honestly, I no longer understand what they’re trying to sell me. Last week it was still a chatbot. This week, the same thing is suddenly called an agent."

He was right. And yet he was also wrong. The terms are indeed being thrown around wildly, but behind the word "agent" lies something fundamentally different from a chatbot. The difference comes down to a single point: a chatbot answers questions. An agent pursues goals.

That sounds like a small nuance. It isn’t.

From the question to the goal

If you tell a chatbot, "Find me the latest invoice from supplier X," you get an answer. Maybe the right one, maybe not. But after that, it’s over. The chatbot waits for your next input.

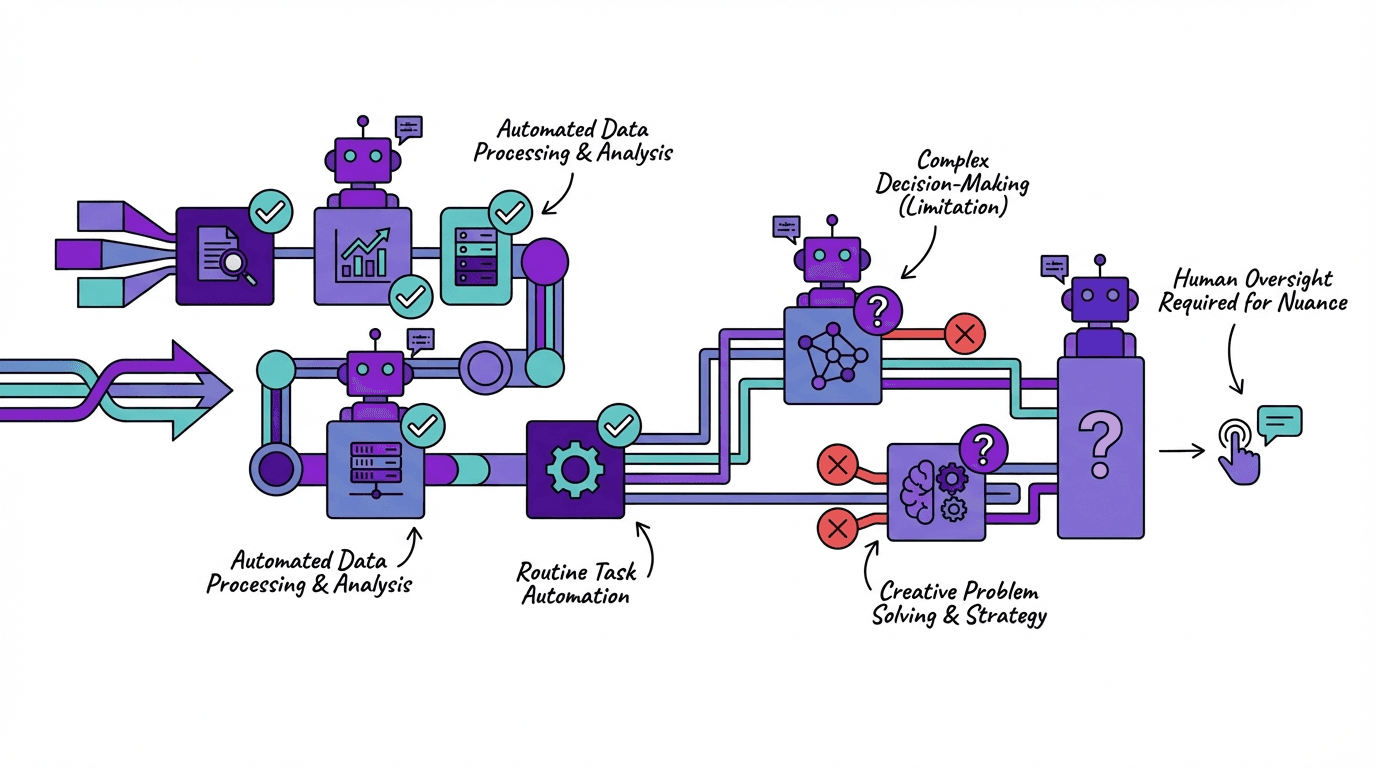

If you tell an AI agent, "Review all open supplier invoices, reconcile them with purchase orders, and flag discrepancies," something different happens. The agent breaks the goal down into steps. It accesses your ERP, retrieves invoice data, compares line items, and detects inconsistencies. If one system does not respond, it tries another route. It plans, executes, and corrects.
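To make the "plan, execute, correct" idea a little more tangible, here is a minimal sketch of the invoice example. It is deliberately simplified: a real agent would let a language model decide on the steps, whereas here they are hard-coded. All names (`Invoice`, `fetch_with_fallback`, `reconcile`, `erp_api`, `nightly_export`) and all data are invented for illustration and do not refer to any real ERP interface.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Invoice:
    invoice_id: str
    po_number: str
    amount: float


def fetch_with_fallback(sources: list[Callable[[], list[Invoice]]]) -> list[Invoice]:
    """Try each source in order; if one does not respond, take the next route."""
    for source in sources:
        try:
            return source()
        except ConnectionError:
            continue  # this route is down, try the next one
    raise RuntimeError("no invoice source responded")


def reconcile(
    invoices: list[Invoice],
    purchase_orders: dict[str, float],
    tolerance: float = 0.01,
) -> list[tuple[str, str]]:
    """Compare each invoice with its purchase order and collect discrepancies."""
    flagged = []
    for inv in invoices:
        expected = purchase_orders.get(inv.po_number)
        if expected is None:
            flagged.append((inv.invoice_id, "no matching purchase order"))
        elif abs(expected - inv.amount) > tolerance:
            flagged.append((inv.invoice_id, "amount differs from purchase order"))
    return flagged


# Hypothetical stand-ins for real systems (assumptions, not real APIs).
def erp_api() -> list[Invoice]:
    raise ConnectionError("ERP API timed out")


def nightly_export() -> list[Invoice]:
    return [
        Invoice("INV-1", "PO-100", 1200.00),
        Invoice("INV-2", "PO-101", 860.50),
        Invoice("INV-3", "PO-999", 75.00),
    ]


purchase_orders = {"PO-100": 1200.00, "PO-101": 680.50}  # made-up sample data

invoices = fetch_with_fallback([erp_api, nightly_export])
flagged = reconcile(invoices, purchase_orders)
for invoice_id, reason in flagged:
    print(invoice_id, reason)
```

In this sketch the first route fails, the second one delivers the data, and two of the three invoices end up flagged for a person to look at. The point is the shape of the loop, not the specific checks.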

This is not a theoretical construct. We already see such setups in use at clients. However—and this is the part vendors like to leave out—it only works under certain conditions.

The conditions nobody likes to mention

In the Zurich workshop, we then drew up a list together of the processes that might be suitable for a first agent pilot. The list was long. Then we validated it: Where is the data structured? Where can the goal be defined clearly enough? Where would an error be noticed before it causes damage?

The list became very short.

And that is exactly the reality we keep seeing in practice. AI agents work well for narrow, repetitive tasks with clear rules. The invoice verification example from earlier. A sales process in which a new lead is automatically enriched, evaluated, and provided with a suitable proposal template. Monitoring tasks where logs and metrics are continuously observed and actions are triggered when anomalies appear.

The shared characteristic: manageable context, structured data, observable outcomes.
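The monitoring case can be sketched in a few lines. The example below assumes a simple rule that we chose for illustration: a value counts as an anomaly if it lies more than a given number of standard deviations away from the mean of the preceding window. The function name `find_anomalies`, the window size, the threshold, and the sample values are all assumptions, not a recommendation for production monitoring.

```python
from statistics import mean, stdev


def find_anomalies(values: list[float], window: int = 5, threshold: float = 3.0) -> list[int]:
    """Return the indices of values that deviate strongly from the preceding window."""
    anomalies = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: any change at all is unusual.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Made-up response times in milliseconds.
response_times = [100, 102, 99, 101, 100, 103, 250, 101]

for index in find_anomalies(response_times):
    # In a real setup, this is where an action would be triggered.
    print(f"anomaly at position {index}: {response_times[index]} ms")
```

Manageable context, structured data, observable outcome: the spike is easy to define, easy to detect, and easy for a person to verify afterwards.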

Where the story turns

Back to the workshop. A department head asked whether an agent could also help evaluate customer projects. Strategic assessment, risk evaluation, decision on resource allocation. The idea was understandable. The answer was: Not yet.

Open, context-rich decisions are the terrain where agents regularly fail today. An agent cannot know that the customer sounded irritated on the phone. That the supply chain has been unstable for three months, but nobody documented it. That the relationship with the partner is delicate because a project went wrong last year. Such decisions require implicit knowledge, human judgment, and sometimes simply gut feeling.

On top of that comes a problem that occurs even more frequently: poor data quality. If your CRM is only half maintained and processes run informally, the agent will not perform miracles. It will fail, and it will fail quietly: an error in the background that no one notices for weeks, until the damage has accumulated.
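A first, rough look at data quality does not require an agent at all. The sketch below measures how completely certain fields are filled in across a set of CRM records. The field names, the sample records, and the 90 percent minimum are assumptions for illustration; what counts as "good enough" depends on the process.

```python
def is_filled(value) -> bool:
    """Treat None, empty strings, and whitespace-only strings as missing."""
    return value is not None and str(value).strip() != ""


def field_completeness(records: list[dict], required_fields: list[str]) -> dict[str, float]:
    """Share of records (0.0 to 1.0) that have a usable value for each field."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in required_fields}
    return {
        field: sum(1 for record in records if is_filled(record.get(field))) / total
        for field in required_fields
    }


def fields_below(completeness: dict[str, float], minimum: float) -> list[str]:
    """Names of fields whose completeness is under the chosen minimum."""
    return sorted(field for field, share in completeness.items() if share < minimum)


# Made-up CRM extract.
crm_records = [
    {"company": "Muster AG", "email": "info@muster.example", "last_contact": "2024-03-01"},
    {"company": "Beispiel GmbH", "email": "", "last_contact": None},
    {"company": "Demo SA", "email": "   ", "last_contact": "2023-11-15"},
    {"company": "Test KG", "email": "kontakt@test.example"},
]

completeness = field_completeness(crm_records, ["company", "email", "last_contact"])
too_sparse = fields_below(completeness, minimum=0.9)
print(completeness)
print("fields that need cleanup first:", too_sparse)
```

A check like this makes the gaps visible before an agent starts working with the data, instead of weeks afterwards.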

The RPA question

Then came the question we now hear in almost every conversation: "But we already have RPA. Do we now also need agents?"

Fair question. Short answer: probably both, but for different things.

RPA (e.g., UiPath, Automation Anywhere, and similar tools) follows fixed scripts. If nothing changes, RPA runs reliably and cost-effectively. As soon as a field is moved or an exception occurs, the bot breaks. AI agents cope better with exceptions: they can replan and choose alternative paths. In return, they are more expensive, harder to test, and less predictable.

Most companies will use both technologies in parallel. RPA for stable standard processes, agents for more dynamic tasks. If someone tells you agents will replace RPA completely, I would ask them a few uncomfortable follow-up questions.

For anyone who has yet to begin with AI automation, our article on practical AI use in companies is a good starting point. And for those who want to check whether the fundamentals are in place before agents make sense: our AI readiness check helps with that.

What you can do tomorrow

By the way, the IT manager from the workshop launched his first agent pilot three weeks later. Not the big strategic vision, but something small: automated checking of incoming orders against stored framework agreements. Clearly defined, structured data, and errors that are noticed immediately.

That is the path we recommend. Pick a process you truly know. One that is repetitive and where an error will be noticed. Then look at the data quality, because that blocks half of all projects. And think in terms of "human in the loop" from the start—not as a fallback, but as a deliberate design decision.
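What "human in the loop" as a design decision can look like is sketched below, using the order check against framework agreements as the example. The rule here is an assumption we picked for illustration: orders that match the agreement pass automatically, and every deviation goes to a person instead of being resolved by the software. The class names, fields, and sample values are hypothetical and not taken from the client's actual pilot.

```python
from dataclasses import dataclass


@dataclass
class FrameworkAgreement:
    article: str
    unit_price: float
    max_quantity: int


@dataclass
class Order:
    order_id: str
    article: str
    unit_price: float
    quantity: int


def check_order(order: Order, agreements: dict[str, FrameworkAgreement]) -> list[str]:
    """Return all deviations from the framework agreement; empty means a match."""
    agreement = agreements.get(order.article)
    if agreement is None:
        return ["no framework agreement for this article"]
    issues = []
    if abs(order.unit_price - agreement.unit_price) > 0.005:
        issues.append("unit price differs from agreement")
    if order.quantity > agreement.max_quantity:
        issues.append("quantity exceeds agreed maximum")
    return issues


def route(order: Order, agreements: dict[str, FrameworkAgreement]) -> tuple[str, list[str]]:
    """Decide who handles the order: the system, or a person."""
    issues = check_order(order, agreements)
    if issues:
        return ("human_review", issues)  # a person decides, with the reasons attached
    return ("auto_accept", [])


# Made-up sample data.
agreements = {"A-100": FrameworkAgreement("A-100", unit_price=12.50, max_quantity=500)}

orders = [
    Order("O-1", "A-100", unit_price=12.50, quantity=200),
    Order("O-2", "A-100", unit_price=13.10, quantity=800),
    Order("O-3", "B-200", unit_price=4.00, quantity=10),
]

decisions = {order.order_id: route(order, agreements) for order in orders}
for order_id, (decision, issues) in decisions.items():
    print(order_id, decision, issues)
```

The review queue is part of the process from day one here, which also means that errors surface where someone will actually see them.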

For SMEs that are still at the beginning, we described in our article on AI in SMEs what the path from chaotic experiments to real business value looks like.

In summary

AI agents are neither polished-up chatbots nor relabeled RPA tools. They pursue goals, plan steps, and self-correct. But they need narrow tasks, clean data, and people who review their results. Those who start with a small, clearly defined pilot and assess data quality honestly can achieve real value today. Those waiting for the big revolution will probably be waiting a while longer.

If you want to look specifically at which processes in your company are suitable for a first agent pilot, we’d be happy to discuss it. No sales pitch, just an honest assessment.