Franco T.,

Too Long; Didn't Read

More than half of the people who use AI at work rely on tools that have not been officially approved. A pragmatic AI governance framework can be set up in four weeks.

Picture this: your sales team uses ChatGPT to generate proposal texts from customer data. Your HR department lets an AI tool pre-sort job applications. And your marketing team creates content with Copilot that nobody checks for hallucinations.

Do you know about it? Did you approve it? Are there rules for it?

If you hesitate on even one of these questions, you are not alone. We see this at practically every Swiss SME we advise. The AI tools are there. Employees are using them. But the governance? It is missing.

Shadow AI: The Invisible Risk

"Shadow AI" is the little brother of shadow IT, only with greater potential for damage. Employees use AI tools on their own initiative because they want to be more productive. That is understandable. But the consequences are real.

According to a Salesforce study (2024), more than 50% of AI users in companies use tools that have not been officially approved. And in 2024 Cyberhaven found that around 11% of the data pasted into ChatGPT is confidential. Customer data, financial figures, strategy papers: all of it ends up with a third-party provider.

This is not a theoretical risk. In 2023 Samsung banned ChatGPT internally after engineers had pasted proprietary source code and internal meeting notes into the chatbot. And in 2023 a team of New York lawyers was sanctioned for citing AI-generated, non-existent court rulings in a case.

(We do not know of any Swiss SME that deliberately sends customer data to OpenAI. But we know many where it happens anyway, simply because no rule forbids it.)

Why Now? Regulatory Pressure Is Growing

Two developments are turning AI governance from "nice to have" into "needed now":

The EU AI Act is a reality. The world's first comprehensive AI law entered into force in August 2024. Its obligations phase in over time: prohibited practices have applied since February 2025, the rules for general-purpose AI apply from August 2025, and full application for high-risk systems follows from August 2026. The penalties? Up to 35 million euros or 7% of global annual turnover.

"That doesn't affect us as a Swiss company" is something we hear often. It is not true. The EU AI Act has extraterritorial effect. If your AI system is placed on the EU market, or its output is used in the EU, you are affected. And almost every Swiss company with EU customers falls into this category.

The nDSG demands transparency. The new Swiss Data Protection Act (in force since September 2023) requires transparency about automated decision-making and gives the people affected the right to human review. If your HR tool uses AI to pre-sort applications and you do not disclose that, you have a problem.

Taken together: the regulatory landscape has shifted. AI without governance is not just risky; it is increasingly unlawful.

What an AI Governance Framework Contains (Without the Bureaucracy Monster)

Here is the good news: AI governance for an SME does not have to be a 200-page rulebook. We work with a three-pillar model that is pragmatic enough to be set up in four weeks and robust enough to cover the essential risks.

Pillar 1: AI Usage Policy

The foundation. A clear, understandable policy that settles:

Which AI tools are allowed? Define an "approved list" of cleared tools. Everything else is off-limits until it has been reviewed.

Which data may go in? Clear categories: Public data: yes. Internal data: only with approved enterprise versions. Customer data and confidential information: never in third-party tools without a data processing agreement.

How is output checked? Every AI-generated output that feeds into decisions, communication, or documents needs human review. Apply the four-eyes principle to everything that leaves the company.

Who is responsible? Appoint an AI officer (it does not have to be a full-time job) and define clear escalation paths.

Sounds doable? It is. Most companies have this in place within a week, provided someone gets it started.
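The tool and data rules of this pillar can also be written down as a small lookup, which makes the policy easy to test and to explain. What follows is a minimal sketch, not a finished implementation: the tool names, the `enterprise` and `dpa` flags, and the three-level `DataClass` are illustrative assumptions, with `dpa` standing for a signed data processing agreement.

```python
from enum import Enum


class DataClass(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"  # customer data and confidential information


# Hypothetical approved list; tool names and flags are illustrative only.
APPROVED_TOOLS = {
    "example-assistant-enterprise": {"enterprise": True, "dpa": True},
    "example-assistant-free": {"enterprise": False, "dpa": False},
}


def is_allowed(tool: str, data: DataClass) -> bool:
    """Return True if the policy permits putting this data class into the tool."""
    entry = APPROVED_TOOLS.get(tool)
    if entry is None:
        # Not on the approved list: off-limits until reviewed.
        return False
    if data is DataClass.PUBLIC:
        return True
    if data is DataClass.INTERNAL:
        # Internal data only in approved enterprise versions.
        return entry["enterprise"]
    # Confidential data only with a data processing agreement in place.
    return entry["dpa"]
```

Calling `is_allowed("example-assistant-free", DataClass.INTERNAL)` returns `False`, because the free version is not an enterprise version. An unknown tool is rejected for every data class, which mirrors the "off-limits until reviewed" rule.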

Pillar 2: AI Risk Assessment

Not every AI tool carries the same risk. A text generator for marketing copy is something different from an AI-supported system that assists with credit decisions. The risk assessment sorts your AI applications into risk categories:

Low risk: Internal productivity tools without sensitive data (e.g. meeting summaries, text drafts with no customer reference)

Medium risk: Tools that use internal data or involve customer contact (e.g. chatbots, proposal assistants, analytics tools)

High risk: Systems that feed into decisions about people (e.g. HR screening, credit checks, security surveillance)

For each category you define appropriate controls. Not everything needs the same effort, and that is exactly what makes the approach pragmatic.
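The three categories above can be expressed as a simple decision rule. The sketch below is one possible reading, under the assumption that each use case is described by three yes/no attributes; the `AIUseCase` fields and the `classify` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class AIUseCase:
    name: str
    affects_decisions_about_people: bool  # e.g. HR screening, credit checks
    uses_internal_data: bool
    customer_facing: bool  # e.g. chatbots, proposal assistants


def classify(use_case: AIUseCase) -> Risk:
    """Assign the highest risk category whose criterion the use case meets."""
    if use_case.affects_decisions_about_people:
        return Risk.HIGH
    if use_case.uses_internal_data or use_case.customer_facing:
        return Risk.MEDIUM
    return Risk.LOW
```

The checks run from high to low so that a tool matching several criteria always lands in the stricter category. For example, `classify(AIUseCase("HR screening", True, True, False))` yields `Risk.HIGH`.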

Pillar 3: Monitoring and Control

Governance without monitoring is like a seatbelt without a buckle. You need:

An AI inventory: Which AI tools are used in the company? (You will be surprised how many there are.)

Regular reviews: A quarterly check of whether the policy is being followed and whether new tools have appeared

An incident process: What happens if confidential data ends up in an unauthorized tool anyway? A clear procedure prevents panic reactions

Training and awareness: The best policy is useless if nobody knows it. Short, regular training sessions (not an annual three-hour compliance course that everyone clicks through)
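An inventory plus a quarterly review can start as a very small data structure. The following sketch assumes a 90-day review interval as a stand-in for "quarterly"; the `InventoryEntry` fields and the `needs_attention` helper are hypothetical and would need adapting to however the inventory is actually kept.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional, Tuple

# Assumption: "quarterly" is approximated as 90 days.
REVIEW_INTERVAL = timedelta(days=90)


@dataclass
class InventoryEntry:
    tool: str
    team: str
    approved: bool
    last_reviewed: Optional[date] = None


def needs_attention(
    inventory: List[InventoryEntry], today: date
) -> List[Tuple[str, str]]:
    """Return (tool, reason) pairs for unapproved tools and overdue reviews."""
    flagged = []
    for entry in inventory:
        if not entry.approved:
            flagged.append((entry.tool, "not approved"))
        elif (
            entry.last_reviewed is None
            or today - entry.last_reviewed > REVIEW_INTERVAL
        ):
            flagged.append((entry.tool, "review overdue"))
    return flagged
```

Running `needs_attention` at each review gives a short worklist: tools that surfaced without approval, and approved tools whose last review is missing or older than the interval.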

An AI Governance Framework in 4 Weeks

Sounds ambitious? It is doable. Here is the roadmap we use with our clients:

Week 1: Take stock. Inventory all AI tools that are in use. Ask actively, and not just the IT department but every team. (A tip: do not ask "Do you use AI?" but "Which AI tools do you use?". The answers are more revealing.)

Week 2: Draft the policy. Write an AI usage policy based on the inventory. Define permitted tools, data categories, and review processes. Keep it to 3-5 pages; nobody reads more than that.

Week 3: Carry out the risk assessment. Categorize the identified tools by risk level. Define controls per category. Prioritize: high-risk applications first.

Week 4: Set up monitoring and communicate. Establish the review process, define the incident procedure, and, most important of all, communicate the governance to all employees. Short onboarding, clear expectations.

This is not a perfect framework. But it is a working one. And a working framework in four weeks beats a perfect one in twelve months.

Governance Is Not a Brake but an Enabler

Here is the point many people miss: the companies that take AI governance seriously innovate faster, not slower.

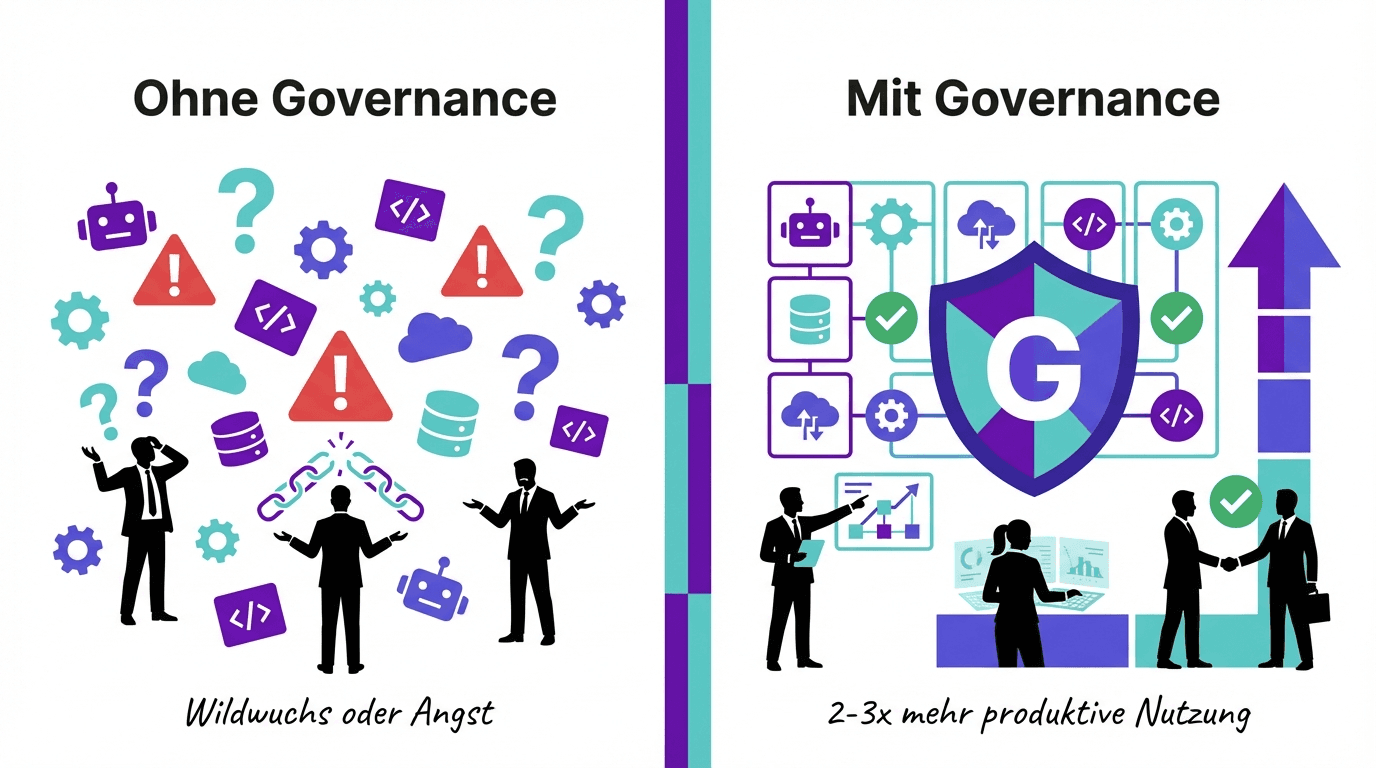

Why? Because clear rules remove uncertainty. When employees know which tools they may use and which data is allowed, they use AI more productively. Without a policy you get either uncontrolled sprawl (risky) or restraint out of fear (missed opportunities). Both are costly.

We have seen with our clients that a clear AI policy increases productive AI use by a factor of two to three, simply because the barrier to getting started drops once the boundaries are clear.

The Next Step

You do not need a perfect AI governance framework by tomorrow. But you should start tomorrow with one step:

Find out which AI tools are used in your company. Ask your team. The answers will surprise you, and they will show you where action is most urgently needed.

(We help Swiss companies build AI governance pragmatically and vendor-neutrally, not as a compliance exercise but as a foundation for safe innovation.)